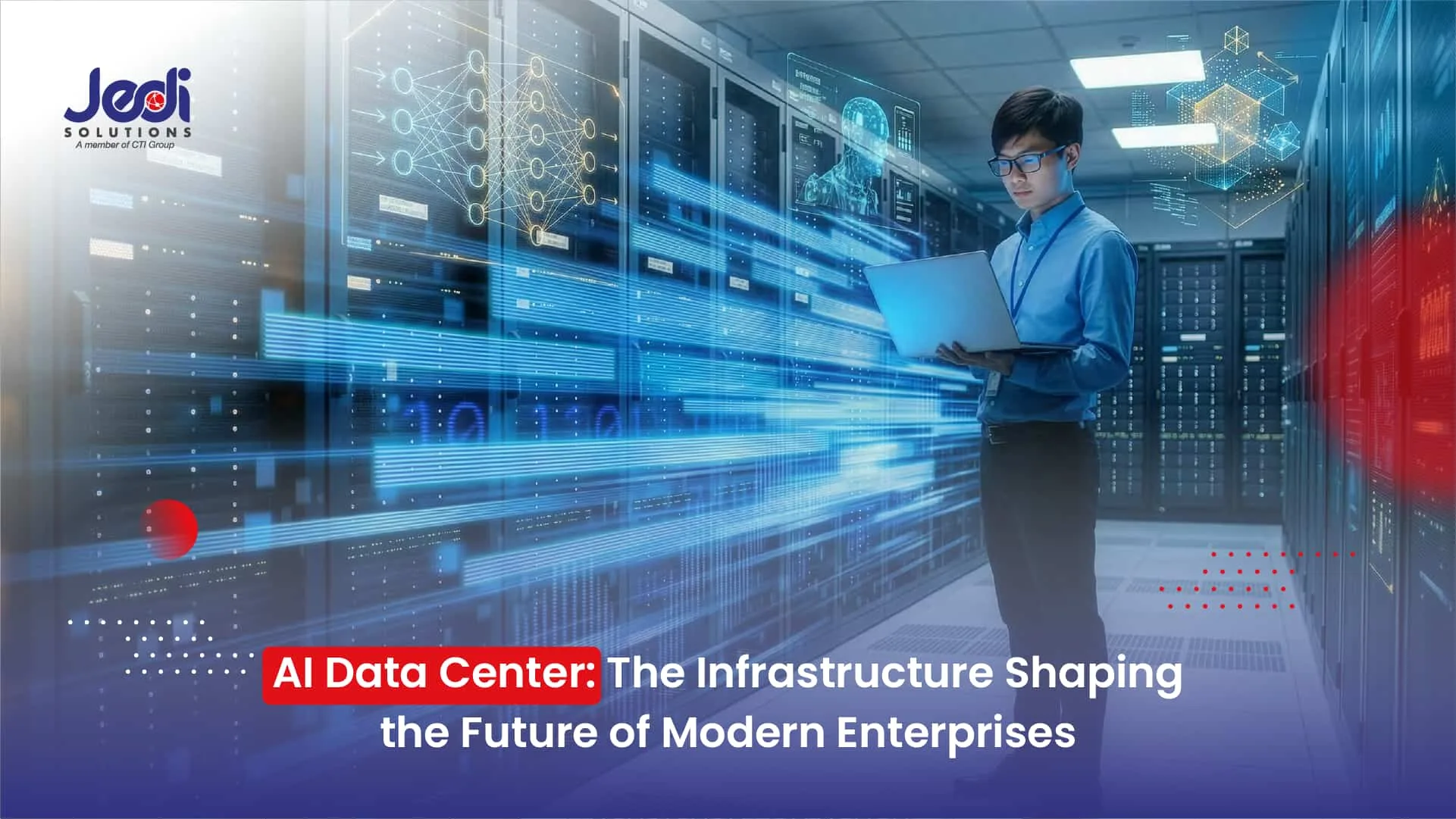

Indonesia’s data center industry is entering a phase of rapid growth. According to IMARC Group, the market is projected to expand from USD 3.1 billion in 2025 to USD 8.4 billion by 2034. This surge is closely tied to the growing demand for computing power driven by the expansion of digital services across the country.
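For a sense of scale, the two IMARC figures imply a compound annual growth rate of roughly 11.7% over the nine years from 2025 to 2034. This is a back-of-the-envelope calculation from the rounded numbers quoted above, so it may differ slightly from any rate IMARC itself reports:

```latex
\text{CAGR} = \left(\frac{8.4}{3.1}\right)^{1/(2034-2025)} - 1 \approx (2.71)^{1/9} - 1 \approx 0.117 \approx 11.7\%
```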

One of the main forces behind this growth is the widespread adoption of AI across various industries. As AI begins running in production environments, the need for AI data centers is increasing significantly. Intensive workloads such as model training, data processing, and real-time inference require computing capabilities far beyond what traditional IT infrastructure was originally designed to handle.

AI 2026: From Experimentation to Operational Systems

A few years ago, AI was often treated as an experimental initiative. Organizations typically explored a handful of use cases to determine whether the technology could deliver real business value.

Today, that perspective is rapidly changing. Many companies are moving AI from pilot projects into core operational workflows, from automated customer service systems to data-driven analytics that support critical decision-making. As AI enters production environments, data centers become the foundational infrastructure that ensures these systems operate reliably and consistently.

This shift introduces new infrastructure demands. To support enterprise-scale AI operations, data centers must provide massive computing power, high-performance storage, and stable network connectivity capable of sustaining modern workloads.

How AI Is Reshaping Data Center Requirements in Indonesia

As AI adoption moves into production-scale deployments, the infrastructure requirements for data centers are evolving. Data centers must now handle far heavier computational loads than traditional enterprise IT systems.

These changes are most visible in several core areas where modern data centers must adapt to the demands of AI workloads.

Higher GPU Demand and Increasing Rack Density

AI workloads rely heavily on high-performance GPU clusters. As a result, each rack holds far more power-hungry hardware than in a traditional server deployment. In an AI data center, this higher density drives up power consumption per rack and calls for more advanced cooling systems to maintain optimal performance.
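To make the density point concrete, the short Python sketch below estimates per-rack power and the heat the cooling system must remove. The server counts and per-server wattages are hypothetical placeholders, not measurements; real values should come from hardware datasheets. It assumes the standard approximation of 1 W ≈ 3.412 BTU/h and that nearly all power drawn by IT equipment ends up as heat.

```python
# Sketch: per-rack power and heat load. All server counts and kW figures
# below are hypothetical placeholders, not vendor specifications.
WATTS_TO_BTU_PER_HOUR = 3.412  # approx. conversion: 1 W of load ≈ 3.412 BTU/h of heat


def rack_power_kw(servers_per_rack: int, kw_per_server: float) -> float:
    """Total IT power drawn by one rack, in kW."""
    return servers_per_rack * kw_per_server


def heat_load_btu_per_hour(power_kw: float) -> float:
    """Heat the cooling system must remove, assuming nearly all IT power becomes heat."""
    return power_kw * 1000 * WATTS_TO_BTU_PER_HOUR


# Hypothetical comparison: one rack of conventional servers vs. one rack of GPU servers.
conventional = rack_power_kw(servers_per_rack=20, kw_per_server=0.4)
gpu = rack_power_kw(servers_per_rack=4, kw_per_server=10.0)

for label, kw in (("Conventional rack", conventional), ("GPU rack", gpu)):
    print(f"{label}: {kw:.1f} kW, about {heat_load_btu_per_hour(kw):,.0f} BTU/h of heat")
```

Under these assumed numbers, the GPU rack draws five times the power of the conventional rack, and its cooling load rises by the same factor.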

The Growing Importance of Data Residency and Local Infrastructure

Many sectors, including banking, healthcare, and public services, operate under strict regulations requiring data to be stored within national borders. When that data is used to train AI models, local AI data center infrastructure becomes essential to ensure regulatory compliance while protecting sensitive organizational data.

AI Operations Require High Availability

Many AI-driven systems operate continuously, from customer service chatbots to real-time fraud detection platforms. As a result, AI data centers must be designed with high availability in mind to ensure digital services remain stable and uninterrupted.
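Availability targets are easier to reason about as a downtime budget. The sketch below is a simple illustration, not a contractual SLA calculation: it converts an availability percentage into the maximum downtime that percentage allows per year, using 8,760 hours and ignoring leap years. The example targets are chosen for illustration only.

```python
# Sketch: convert an availability target into an annual downtime budget.
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; leap years ignored for simplicity


def annual_downtime_hours(availability_pct: float) -> float:
    """Maximum hours of downtime per year permitted by an availability percentage."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR


# Example targets chosen for illustration only.
for target in (99.0, 99.9, 99.99):
    hours = annual_downtime_hours(target)
    print(f"{target}% availability -> {hours:.2f} h ({hours * 60:.0f} min) of downtime per year")
```

At 99.9% the budget is about 8.8 hours a year; at 99.99% it shrinks to under an hour, which is why always-on AI services push facilities toward redundant power and cooling.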

Infrastructure Challenges When Companies Adopt AI

In many cases, data center environments originally designed for conventional business applications are not equipped to support the intensive computational requirements of AI.

As organizations begin building AI data center environments, several infrastructure challenges commonly emerge.

Limited Power and Cooling Capacity

GPU-based AI servers consume significantly more power than conventional servers. Without adequate power supply and cooling infrastructure, maintaining stable performance in an AI data center can become a major operational challenge.
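One common way to size the power side is Power Usage Effectiveness (PUE), defined as total facility power divided by IT equipment power. The sketch below uses hypothetical rack counts, rack loads, and PUE values to show how much extra power goes to cooling and other overhead on top of the GPU servers themselves.

```python
# Sketch: total facility power implied by an IT load and a PUE value.
# PUE (Power Usage Effectiveness) = total facility power / IT equipment power.


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power in kW; the overhead above IT load is (pue - 1) * it_load_kw."""
    if pue < 1.0:
        raise ValueError("PUE cannot be lower than 1.0")
    return it_load_kw * pue


# Hypothetical example: ten GPU racks at 40 kW each, compared at two assumed PUE values.
it_load_kw = 10 * 40.0
for pue in (1.4, 1.8):
    total_kw = facility_power_kw(it_load_kw, pue)
    overhead_kw = total_kw - it_load_kw
    print(f"PUE {pue}: {total_kw:.0f} kW total, {overhead_kw:.0f} kW for cooling and other overhead")
```

With a 400 kW IT load, a PUE of 1.4 implies about 160 kW of overhead while 1.8 implies about 320 kW, so cooling efficiency directly affects how much GPU capacity a given power supply can support.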

Scalability Constraints

As AI computing demands grow, organizations often need to add GPU clusters or expand server capacity quickly. However, many internal data centers face limitations in available power, physical space, and cooling capacity, making AI data center expansion difficult.
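In practice, the scaling limit is whichever resource runs out first. The hypothetical sketch below (the function name and all facility figures are illustrative assumptions) counts how many more racks a facility can add before spare power, spare cooling, or free floor positions are exhausted.

```python
import math

# Sketch: how many more racks fit before the first limit is hit.
# Function name and all facility figures are hypothetical.


def max_additional_racks(
    spare_power_kw: float,
    spare_cooling_kw: float,
    free_rack_positions: int,
    kw_per_rack: float,
) -> int:
    """Racks that can be added before spare power, spare cooling, or floor space runs out."""
    by_power = math.floor(spare_power_kw / kw_per_rack)
    by_cooling = math.floor(spare_cooling_kw / kw_per_rack)  # cooling sized in kW of heat removed
    return min(by_power, by_cooling, free_rack_positions)


# Hypothetical facility: ample floor space, limited spare power and cooling.
facility = {"spare_power_kw": 150, "spare_cooling_kw": 100, "free_rack_positions": 20}
print("GPU racks (40 kW):", max_additional_racks(**facility, kw_per_rack=40))
print("Conventional racks (8 kW):", max_additional_racks(**facility, kw_per_rack=8))
```

In this made-up facility, floor space is not the bottleneck: cooling limits it to 2 more 40 kW GPU racks, even though 12 more 8 kW conventional racks would fit.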

Physical Security and Access Control

GPU servers and AI storage infrastructure represent significant capital investments. Because of this, facilities supporting AI data centers must implement strict physical security and access control measures to safeguard critical assets.


On-Premises or Colocation: Choosing the Right Infrastructure Strategy for AI Workloads

As organizations build AI computing environments, one critical decision is selecting the most appropriate infrastructure model for their AI data center strategy. Some companies choose to build and operate their own data centers, while others rely on colocation facilities that provide ready-to-use infrastructure.

Understanding the differences between these two models helps organizations choose a more efficient AI data center strategy while keeping the flexibility to scale as AI computing demands grow.

On-Premises Infrastructure

On-premises infrastructure gives organizations full control over the hardware, systems, and operational management of their data centers. This model is often chosen by companies with highly specific technical requirements or strict internal governance policies that call for direct control of the entire AI data center environment.

However, this approach requires substantial investment, from building the physical facility to covering the ongoing costs of power, cooling, and day-to-day operations.

Colocation Data Center

Colocation allows organizations to place their servers within professionally managed data center facilities that already provide enterprise-grade infrastructure. In this model, companies retain control over their servers while the facility provides the supporting infrastructure, including power, cooling, network connectivity, and security systems.

This approach is often preferred by organizations seeking faster deployment of AI data center capabilities without the need to build a new facility from the ground up.

JEDI Colocation & Data Center: Reliable Infrastructure for AI Computing

Through JEDI Colocation & Data Center solutions, organizations can run their systems in facilities designed to support modern computing requirements. The infrastructure includes a high-availability architecture, 24/7 monitoring, and reliable power, cooling, and connectivity.

This infrastructure foundation enables organizations to manage AI data center workloads more reliably while maintaining the flexibility to scale capacity as business needs evolve.

Build Your AI Infrastructure with JEDI Solutions

As part of CTI Group, JEDI helps organizations build AI data center foundations capable of supporting modern workloads. Through JEDI Colocation & Data Center services, companies can operate their systems in data center environments designed for performance, security, and scalability.

Contact us to learn how our colocation and data center services can help prepare your AI data center infrastructure for future computing demands.

Author: Danurdhara Suluh Prasasta

CTI Group Content Writer